openSUSE:Build Service Concept QA

How to make QA a help instead of a burden?

Nothing is more annoying than a QA check finding a problem long after you thought the job was finished. All possible QA should therefore run as early as possible, and its results should be visible immediately in a place where the developer is looking anyway. This is usually the build result, either in monitor overviews or in notifications.

It should also provide directly helpful information, such as concrete error messages or stack traces, to minimize the need to set up a separate development system.

QA frameworks

This concept shall not invent its own QA framework but focus on integrating as many existing ones as possible.

As a result, OBS needs a simple SUCCESS/ERROR (and maybe WARNING) result handling so that simple overviews can be generated, while still being able to show all kinds of (possibly extensive) QA framework results. These can be simple text files, but also binary files such as core files or even full snapshots of a VM.

More sophisticated states like "expected failure" are deliberately avoided in this concept; they belong to the QA frameworks used underneath.
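A minimal sketch of such a result model, assuming Python as the illustration language. The names `QAState`, `QAResult` and `overview` are hypothetical and not part of OBS; the only ideas taken from the text are the three coarse states and the freedom to attach arbitrary framework output.

```python
from dataclasses import dataclass, field
from enum import Enum
from pathlib import Path


class QAState(Enum):
    """The only states OBS itself would need to understand."""
    SUCCESS = "success"
    WARNING = "warning"
    ERROR = "error"


# Order used to pick the worst state for an overview.
SEVERITY = [QAState.SUCCESS, QAState.WARNING, QAState.ERROR]


@dataclass
class QAResult:
    check_name: str
    state: QAState
    # Opaque framework output: text logs, core files, VM snapshots, ...
    artifacts: list[Path] = field(default_factory=list)


def overview(results):
    """Collapse many results into one state (assumption: worst state wins)."""
    worst = QAState.SUCCESS
    for result in results:
        if SEVERITY.index(result.state) > SEVERITY.index(worst):
            worst = result.state
    return worst
```

Anything finer grained, such as an expected failure, would stay inside the artifacts written by the QA framework and never reach `QAState`.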

QA frameworks to be integrated

- Test suites coming with the source.

In rpm spec files the %check section should be used to run them.

- External test suites

- Manual test scenarios as described in Testopia

- Packaged test suites

... (to be completed)

Kind of QA tests

It is important for the OBS handling to know when and where a QA check shall run. In the long run we should support the following cases:

- at compile time => the full build tree is still available

- directly after packaging => the built package has been installed into the build environment. Additional packages for running the QA may have been installed as well. The build tree may still be available.

- Software stack traces => A defined set of packages has finished building and gets installed into a defined environment. No build time data is available anymore.

- product images have been built => an automatable installation shall run inside a VM (possibly emulated, e.g. with QEMU)

- Network setups => multiple VM setups (either via packages or via images) need to be able to interact.

- Hardware specific setups => Identical, well-defined hardware systems are needed, either to test a driver for specific hardware or to make benchmarks comparable.

- Automated SW update for hardware specific setups => To test specific hardware support (on hardware different from the build system), an automated software update is needed (e.g. automated flashing or automated network boot from the produced image). This also includes automated updates of only the changed packages. (optional)

- Automated test control for hardware specific setups => When tests run in a different environment on hardware specific setups, OBS needs to handle the connection, test control and power supply control (e.g. to reboot and continue if the test device hangs with experimental kernel software).

Note: an appliance build may be handled like a package which is executed directly after the build. This is limited to appliances which can run directly in our VMs.

Connect QA tests with code

QA checks should be reusable, in the best case maintained in just one place and reused all over OBS. QA checks may be applicable

- For all packages or images (appliances or products)

- For a defined set of packages or images

- For specific packages, but in multiple projects

- For just one specific package in a specific project

As a result, a QA check may define for which packages it should run. Some examples:

- Always, for all builds in the entire OBS

- Run for all packages in openSUSE:Factory.

- Run for all packages which have gcc-c++ in their build requires
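The three examples above can be read as predicates over a build. The following is only a sketch under that assumption: `Build`, the selector helpers and the check names in `registry` are all hypothetical, and OBS defines no such API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Build:
    project: str
    package: str
    build_requires: set[str] = field(default_factory=set)


# A selector decides whether a QA check applies to a given build.
Selector = Callable[[Build], bool]


def always() -> Selector:
    return lambda build: True


def in_project(project: str) -> Selector:
    return lambda build: build.project == project


def requires(name: str) -> Selector:
    return lambda build: name in build.build_requires


def checks_for(build, registry):
    """Return the sorted names of all checks whose selector matches the build."""
    return sorted(name for name, selector in registry.items() if selector(build))


# Hypothetical check names, one per example from the list above.
registry = {
    "global-check": always(),
    "factory-check": in_project("openSUSE:Factory"),
    "cxx-check": requires("gcc-c++"),
}

bzip2 = Build("home:user", "bzip2", {"gcc-c++"})
print(checks_for(bzip2, registry))  # ['cxx-check', 'global-check']
```

Keeping the selection rule next to the check, rather than in each package, is what would let one check definition be reused across the whole OBS.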

Some kind of admin privilege is needed to create such definitions.

On the other hand, a package or a project may pick a set of QA checks from arbitrary places.

In any case, the same QA checks should run by default when a package gets derived into another place (for example by branching or linking a package source).

Potential workflow

This is for testing individual package builds. We need some additional workflow for testing a product.

For this example, we are using "bzip2".

- Build bzip2 package

- Build qa_bzip2

- The build service needs some way to know that it also needs to build qa_bzip2

- Either we add bzip2 as a BuildRequires inside of qa_bzip2, or we extend OBS

- Install the qa_bzip2 package once it is built (on the same VM or chroot as the build)

- We need to fulfill the Requires dependencies (libbz2-devel, ctcs2, etc)

- Build script executes some 'build-qa' script

- This script needs to understand how to run ctcs2 (or otherwise) tests and run them

- Processes results and outputs something that the build service can use

- Build service picks up results and stores/reports them

- Overall aggregate test result: SUCCEEDED/FAILED/SKIPPED

- Each individual test case: PASSED/FAILED

- The build could succeed, but the tests could fail

- We could show an overall test result (succeeded/failed/skipped) on the build service, then have specific results in a test log
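The aggregation step of this workflow could look like the sketch below. The rule that an empty set of test cases yields SKIPPED is an assumption, as are the function names `aggregate` and `report`; the text only names the states.

```python
def aggregate(cases):
    """Overall result for a mapping of test case name -> 'PASSED' or 'FAILED'."""
    if not cases:
        # Assumption: no test cases ran, so the QA run counts as skipped.
        return "SKIPPED"
    if any(state == "FAILED" for state in cases.values()):
        return "FAILED"
    return "SUCCEEDED"


def report(build_state, cases):
    """Keep build state and QA state separate: a build may succeed while tests fail."""
    return {"build": build_state, "qa": aggregate(cases), "cases": dict(cases)}


# Hypothetical test case names for the bzip2 example.
print(report("succeeded", {"compress": "PASSED", "decompress": "FAILED"})["qa"])  # FAILED
```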

.QA file

Do we need a .qa file? So far we think we can avoid it: we just package QA checks and run all executables in a certain directory. We may need to add one later to describe, for example, how to run a certain appliance and how to check it.

Packaging QA checks together with their executable dependencies (if any) is useful, because developers can then install the checks in their development environment and run them before submitting packages to the build system.
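A sketch of the "run all executables in a certain directory" idea, usable both inside a build and on a developer machine. The function name `run_checks`, the timeout, and the convention that exit code 0 means PASSED are assumptions; the directory is passed in because this concept does not fix its location.

```python
import os
import subprocess
from pathlib import Path


def run_checks(check_dir, timeout=600):
    """Run every executable file in check_dir; return {name: (state, output)}."""
    results = {}
    for path in sorted(Path(check_dir).iterdir()):
        # Skip subdirectories, data files and anything without an execute bit.
        if not (path.is_file() and os.access(path, os.X_OK)):
            continue
        try:
            proc = subprocess.run(
                [str(path)], capture_output=True, text=True, timeout=timeout
            )
            # Assumption: exit code 0 means the check passed.
            state = "PASSED" if proc.returncode == 0 else "FAILED"
            output = proc.stdout + proc.stderr
        except subprocess.TimeoutExpired:
            state = "FAILED"
            output = f"timed out after {timeout}s"
        results[path.name] = (state, output)
    return results
```

Running the checks in sorted order keeps the log stable between runs, which makes differences between two QA results easier to spot.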

Make differences in QA results visible

Implementation concepts

Example setup

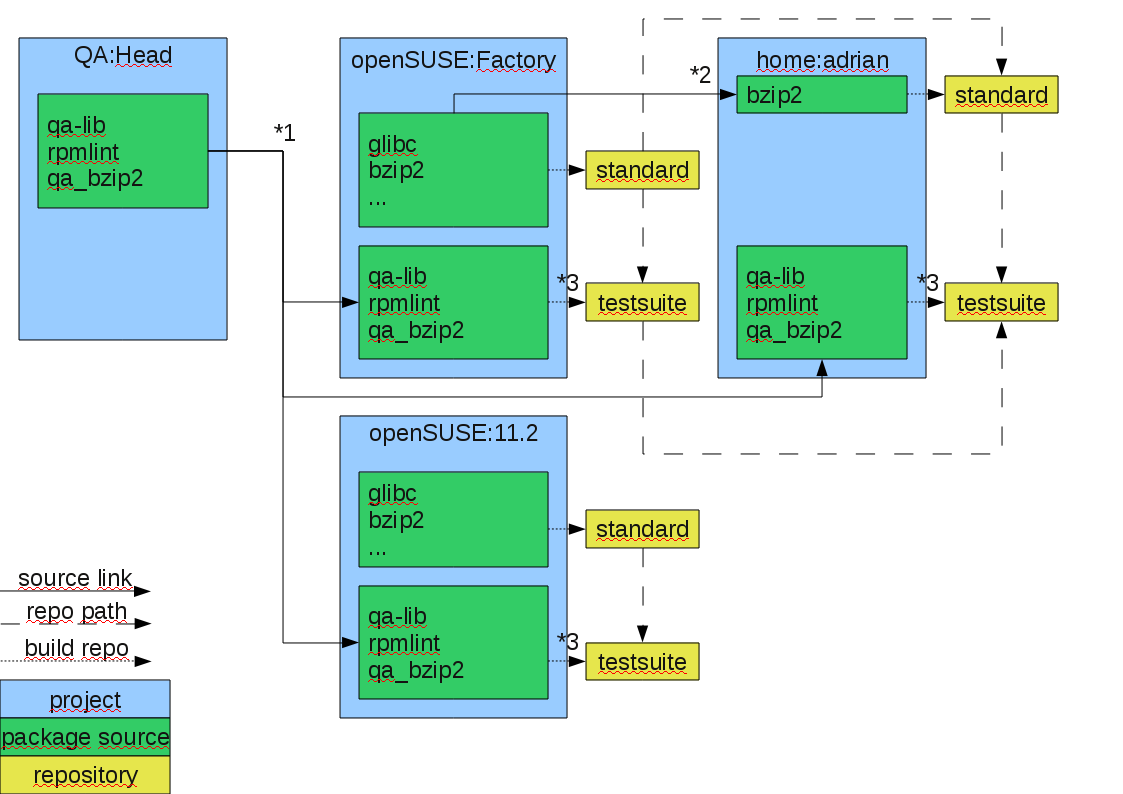

1) A project source link, which links all QA packages into the target project so that they are rebuilt and run. The packages build and run only in the testsuite repo.

2) A package source link, which just links the package to be modified via a branch call.

QA checks may come from a dedicated package in the QA:Head project or from the upstream sources in the openSUSE:* package sources.

Open Questions:

- How to enable/disable package builds of project linked sources just for one repo?

- Depend on repo name specification?

- Do this via a dedicated package build type and repo flag? (rpm-qa or deb-qa)

- How to run QA checks which come with the upstream source independently of the build?

- Can rpmbuild skip %check?

- Can we run it afterwards while avoiding the %clean section?

- How to handle this for deb?

- How to find all QA results concerning a certain package?

- Check all packages which match a certain attribute?

- Check all QA builds which used any of the local packages from the standard repo?

To be defined

This needs to be defined by the build service team:

- How to report back the QA state separately from the build result

- Define a directory where QA checks can export test results in any kind of format. Its content is to be transferred back to the rep server and made downloadable via the API.
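As an illustration only, a QA run could fill such an export directory as follows. Everything here is an assumption pending the definition by the build service team: the function name `export_results`, the file name `summary.json` and its layout are hypothetical, and the directory itself is a parameter because no path has been defined yet.

```python
import json
import shutil
from pathlib import Path


def export_results(export_dir, qa_state, cases, attachments=()):
    """Write a machine-readable summary plus raw attachments into export_dir."""
    export_dir = Path(export_dir)
    export_dir.mkdir(parents=True, exist_ok=True)

    # Hypothetical summary file: QA state kept separate from the build result.
    summary = {"qa_state": qa_state, "cases": cases}
    summary_path = export_dir / "summary.json"
    summary_path.write_text(json.dumps(summary, indent=2, sort_keys=True))

    # Attachments are copied untouched, whatever their format (logs, core files, ...).
    for src in attachments:
        shutil.copy2(src, export_dir / Path(src).name)
    return summary_path
```

A small fixed summary next to free-form attachments would let OBS build overviews without having to parse any framework specific format.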